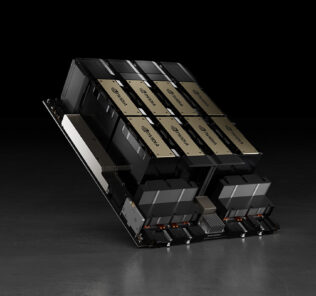

Baidu KUNLUN AI accelerator with 260 TOPS set for mass production next year

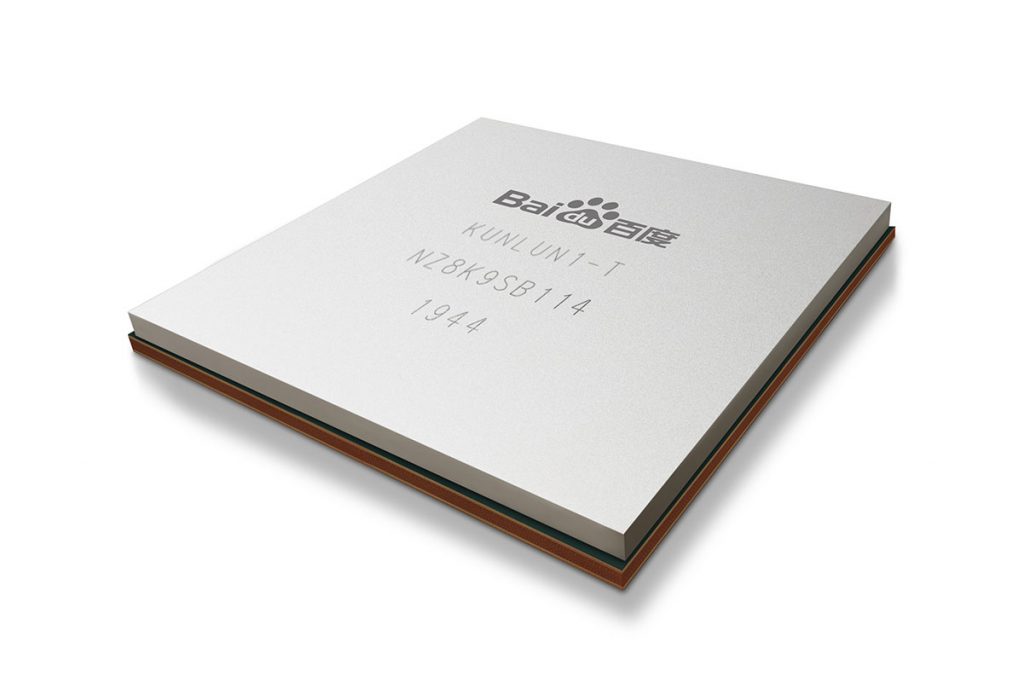

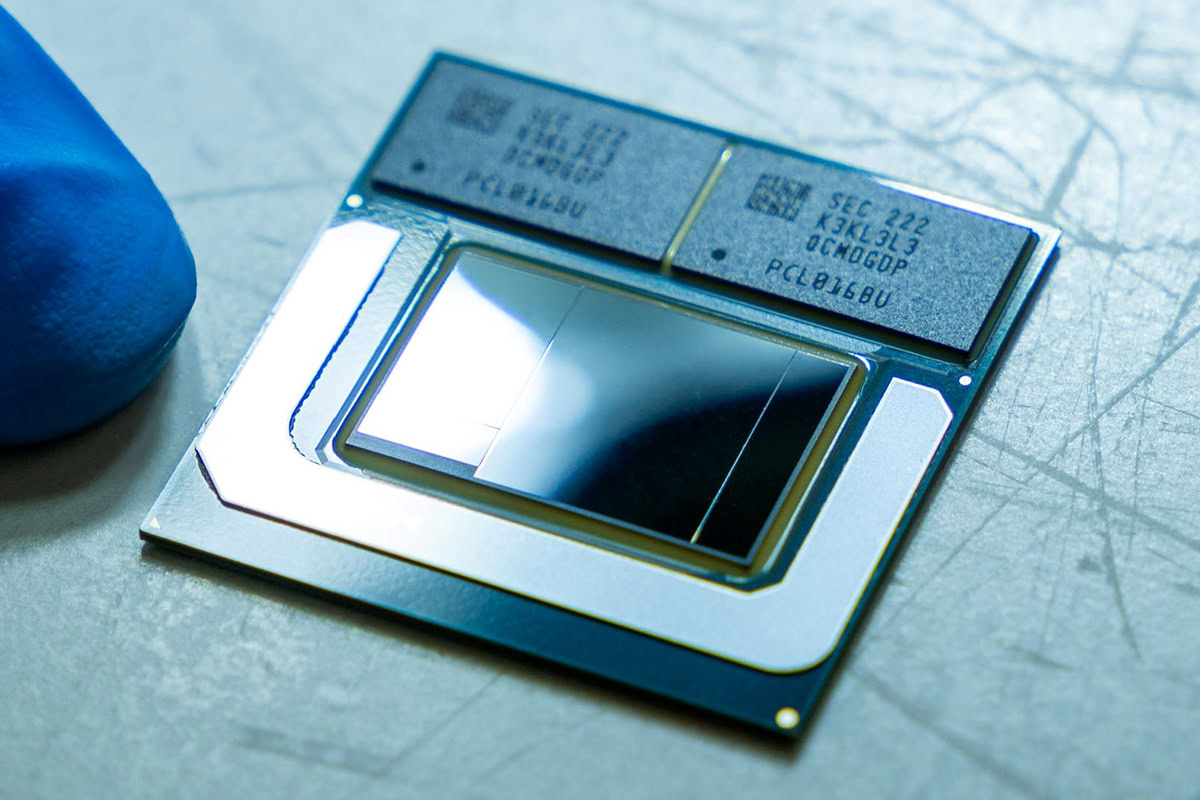

Baidu KUNLUN has completed development and will enter mass production early next year. The cloud-to-edge AI accelerator was developed by Baidu in partnership with Samsung Electronics and will be manufactured on Samsung’s 14nm process using Samsung’s I-Cube packaging.

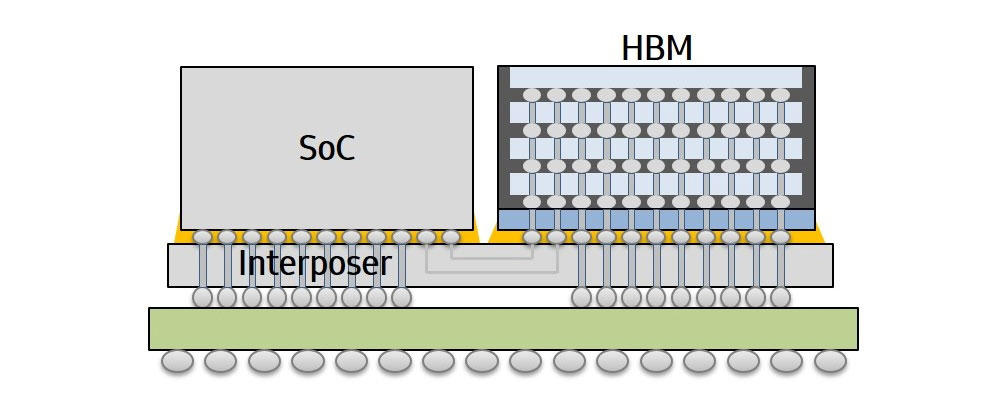

Samsung’s I-Cube technology connects a logic chip and high bandwidth memory (HBM) through an interposer, similar to how AMD’s Vega GPUs are built. The logic chip here is Baidu’s own XPU, its home-grown neural processor architecture, which communicates with the HBM at up to 512 GB/s.

Baidu will use the chip for a range of AI workloads, including search ranking, speech recognition, image processing, natural language processing and autonomous driving. These applications are enabled by the Baidu KUNLUN’s efficiency: up to 260 TOPS of performance at just 150W.
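To put the 260 TOPS at 150W figure in perspective, here is a back-of-envelope sketch that converts it into throughput per watt and energy per operation. It assumes both numbers are peak ratings reached at the same time; the article does not say which numeric precision (for example INT8) the TOPS figure refers to, so treat the results as rough upper bounds rather than measured efficiency. The function names are purely illustrative.

```python
def tops_per_watt(peak_tops: float, power_w: float) -> float:
    """Peak throughput per watt, in TOPS/W (assumes peak TOPS and power coincide)."""
    return peak_tops / power_w


def picojoules_per_op(peak_tops: float, power_w: float) -> float:
    """Energy spent per operation at the peak rate, in picojoules."""
    ops_per_second = peak_tops * 1e12    # 1 TOPS = 10^12 operations per second
    joules_per_op = power_w / ops_per_second  # watts are joules per second
    return joules_per_op * 1e12          # convert joules to picojoules


# Figures quoted for Baidu KUNLUN: up to 260 TOPS at 150 W.
efficiency = tops_per_watt(260, 150)
energy = picojoules_per_op(260, 150)
print(f"{efficiency:.2f} TOPS/W, {energy:.2f} pJ/op")  # prints: 1.73 TOPS/W, 0.58 pJ/op
```

In other words, at its quoted peak the chip would spend a little over half a picojoule per operation; real workloads rarely sustain peak throughput, so practical efficiency will be lower.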

The new chip is reportedly three times faster than conventional GPU- or FPGA-accelerated setups when running Ernie, Baidu’s pre-trained model for natural language processing.

Pokdepinion: I honestly didn’t know Baidu was involved in chip design too.
