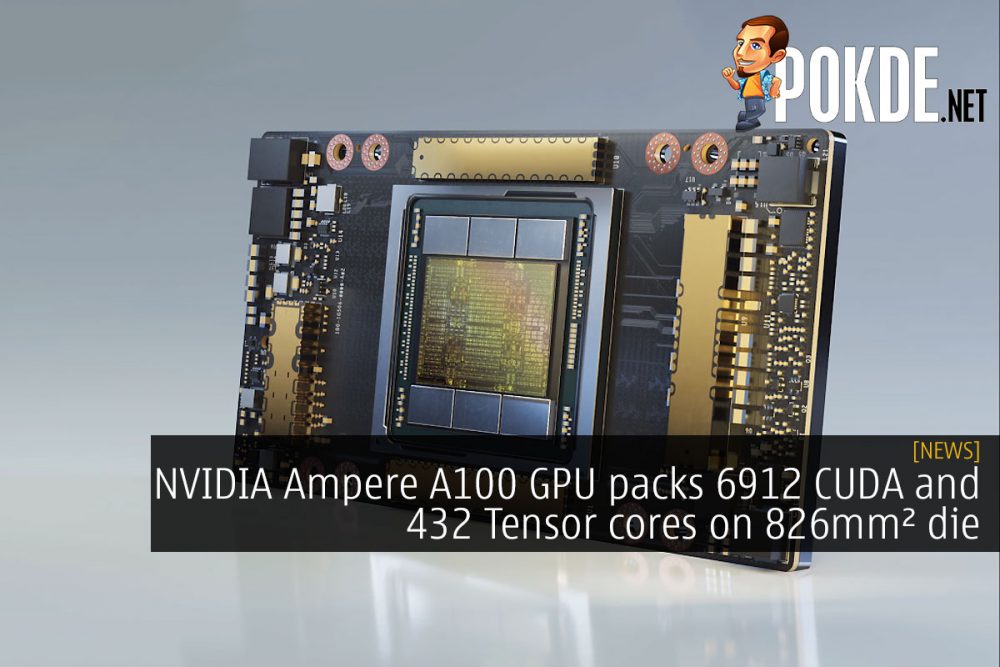

NVIDIA Ampere A100 GPU packs 6912 CUDA and 432 Tensor cores on 826mm² die

NVIDIA held its GPU Technology Conference (GTC) 2020 earlier and used it to launch the NVIDIA Ampere architecture, although you will probably have to wait a bit longer for the gaming-oriented Ampere GPUs to be unveiled. The first Ampere product is the NVIDIA A100, a high-performance computing (HPC) GPU designed to accelerate AI and data analytics workloads at scale.

The NVIDIA A100 GPU is a massive 826mm² die packing 54.2 billion transistors. NVIDIA has moved from last-gen's 12nm process to TSMC's 7nm process, which is what allows for these gains in transistor density. The monstrous GPU is mated to 40GB of HBM2 via a 5120-bit memory bus, allowing for 1.6 TB/s of memory bandwidth.
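As a quick sanity check on that bandwidth figure, peak memory bandwidth is simply the bus width multiplied by the per-pin data rate. The sketch below assumes the 2.4 Gbps per-pin rate listed in the specs further down; the function name `peak_bandwidth_gbs` is only illustrative.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bits moved per second across the bus, divided by 8."""
    return bus_width_bits * data_rate_gbps / 8


# Figures quoted in this article: 5120-bit bus, 2.4 Gbps per pin (assumed exact here).
a100 = peak_bandwidth_gbs(5120, 2.4)
print(f"{a100:.0f} GB/s (~{a100 / 1000:.2f} TB/s)")
```

With these inputs the result is 1536 GB/s, or roughly 1.5 TB/s, so the 1.6 TB/s headline number is best read as a rounded figure.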

All that memory bandwidth will be needed to keep the cores on the NVIDIA A100 busy. NVIDIA packed a whopping 6912 CUDA cores and 432 3rd Gen Tensor cores onto the A100 GPU. Four of these 3rd Gen Tensor cores offer 2x the raw fused multiply-add (FMA) computational power of eight Tensor cores in the last-gen GV100 GPU, so you are looking at more than double the FP16 Tensor performance in the NVIDIA A100, despite it having fewer Tensor cores than its predecessor. The Tensor cores in NVIDIA Ampere also support more formats: TF32, bfloat16 and FP64. NVIDIA also introduced support for sparsity acceleration, where half of a network's weights can be pruned to double the performance, with virtually no loss in inferencing accuracy.
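To make the sparsity idea a little more concrete, here is a minimal sketch of pruning a list of weights so that only two of every four values stay non-zero. The 2-out-of-4 pattern and the helper name `prune_2_of_4` are assumptions made for illustration, not details taken from this article, and this is plain Python that only shows the data layout, not anything that runs on Tensor cores.

```python
def prune_2_of_4(weights):
    """Zero the two smallest-magnitude values in every group of four weights."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest-magnitude entries in this group.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(w if i in keep else 0.0 for i, w in enumerate(group))
    return pruned


weights = [0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.8, 0.01]
sparse = prune_2_of_4(weights)
print(sparse)  # [0.9, 0.0, 0.0, -0.7, 0.0, 0.3, -0.8, 0.0]
```

Half of the values end up as zeros in a predictable pattern, which is what lets hardware skip them instead of multiplying by zero.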

For inter-GPU communications, the NVIDIA A100 supports NVLink 3, the latest iteration of the NVIDIA NVLink interconnect technology. Each GPU gets 12 NVLinks for a total bandwidth of 600GB/s, which allows for eight-GPU configurations where every GPU can communicate directly with every other GPU.
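A little arithmetic on those NVLink numbers, as a rough sketch: it only divides the quoted total by the link count and counts the GPU pairs in an eight-GPU system. How the links are physically wired between GPUs is not covered here, and the constant names are just for illustration.

```python
from itertools import combinations

NVLINKS_PER_GPU = 12          # from the article
TOTAL_BANDWIDTH_GBS = 600     # from the article
GPUS = 8                      # eight-GPU configuration

# Bandwidth carried by each individual link.
per_link_gbs = TOTAL_BANDWIDTH_GBS / NVLINKS_PER_GPU

# Every distinct pair of GPUs that needs a direct path in an all-to-all setup.
gpu_pairs = list(combinations(range(GPUS), 2))

print(f"{per_link_gbs:.0f} GB/s per link, {len(gpu_pairs)} GPU pairs")
```

That works out to 50 GB/s per link and 28 GPU-to-GPU pairs.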

NVIDIA also added Multi-Instance GPU (MIG) with the NVIDIA A100, which lets you partition a GPU into up to seven instances. Multiple users can each run on their own slice of the GPU, on separate SMs with dedicated L2 cache and memory, which makes performance more predictable than with previous implementations. These configurations should benefit cloud computing providers, as they allow for higher utilization of the GPUs. If you are interested in a more in-depth look at the NVIDIA Ampere architecture, you can head on to this blog post.

NVIDIA Ampere A100 Specs

- A100, 108 SMs, TSMC 7nm

- 6912 CUDA cores

- 432 Tensor cores

- Up to 1410 MHz Boost

- 40GB HBM2 @ 2.4 Gbps

- 5120-bit memory bus, 1.6 TB/s bandwidth

- PCIe 4.0 x16

- 400W TDP
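A few derived numbers fall out of the list above. The sketch below takes the boost clock as 1410 MHz and assumes each CUDA core can retire one FMA (counted as two floating-point operations) per clock, which is the usual way peak FP32 throughput is estimated; treat the result as a theoretical ceiling, not a benchmark.

```python
SMS = 108
CUDA_CORES = 6912
TENSOR_CORES = 432
BOOST_MHZ = 1410  # boost clock in MHz

# Per-SM resources, using the totals from the spec list.
cuda_per_sm = CUDA_CORES // SMS
tensor_per_sm = TENSOR_CORES // SMS

# Assumption: 1 FMA = 2 FLOPs per CUDA core per clock.
peak_fp32_tflops = CUDA_CORES * 2 * BOOST_MHZ / 1e6

print(f"{cuda_per_sm} CUDA cores and {tensor_per_sm} Tensor cores per SM")
print(f"~{peak_fp32_tflops:.1f} TFLOPS peak FP32")
```

This gives 64 CUDA cores and 4 Tensor cores per SM, and a peak of about 19.5 TFLOPS FP32 at boost.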

If you fancy yourself an NVIDIA Ampere GPU right now, you can already get them in the NVIDIA DGX A100 systems. Each system packs eight NVIDIA A100 GPUs, two 64-core AMD EPYC 7742 (Rome) CPUs, 1TB RAM, 15TB of PCIe 4.0 storage and nine Mellanox ConnectX-6 200 Gbps network interfaces. Each one costs a cool $199K (~RM864K), so have fun. These are already shipping.

Pokdepinion: I wonder if those Tensor improvements will come to the consumer segment to boost DLSS performance?
