Let’s Talk: Smartphone Camera’s Moonshot

I certainly don’t speak for everyone – but if you have been paying attention to recent smartphone releases, it’s almost an unspoken rule that every keynote has its product managers or CEOs talking about how great the cameras are at taking low-light photos, enhancing colors, and what-have-you (some companies even invented a word for it). As a person who doesn’t really care about camera quality, that makes me question: are smartphone cameras really that much more important than other aspects of the device?

The Basics Of Cameras

In a typical camera, a few elements make it work: a CMOS sensor, a lens, a shutter, and additional circuitry that connects them into a single unit.

The CMOS sensor is the very thing that creates the image – it’s full of what we call “photodiodes” arranged in a pixel array, each sitting under a red, green or blue color filter, a bit like the subpixels on your display. The bigger the sensor, the more light it gathers, and thus the cleaner and more accurate the image it produces. Meanwhile, the lens is like a funnel: it bends the incoming light onto the sensor and keeps things in focus. Its focal length sets the zoom – usually, a longer focal length gives you more magnification.

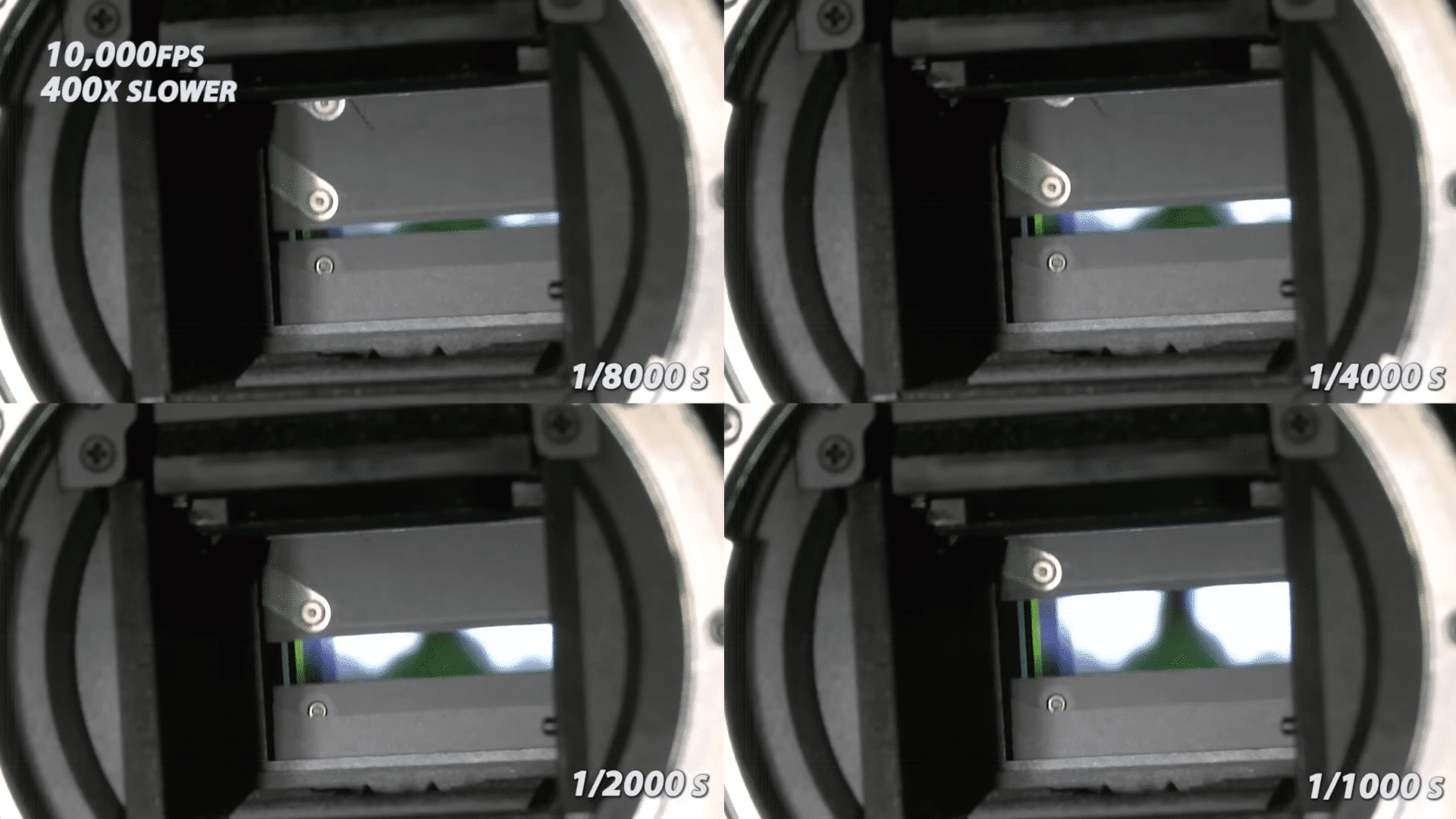

And then there’s the shutter, a physical door placed right in front of the sensor that opens for a specific amount of time to let light in and form an image. Open it for too long and you get an overexposed image; close it too quickly and the resulting image ends up without enough light. However, the confines of a smartphone mean there’s no mechanical shutter – it is done electronically, by controlling how long the sensor collects light before it is read out, which mimics the open duration of its mechanical counterpart.
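To put rough numbers on that trade-off, here is a minimal sketch. It assumes the light collected scales linearly with exposure time while aperture, ISO and the scene stay fixed. The function name `stops_difference` and the sample shutter speeds are made up for illustration and do not come from any camera API.

```python
import math

def stops_difference(t_new: float, t_ref: float) -> float:
    """Return how many stops more (+) or less (-) light t_new collects than t_ref.

    Assumes light gathered is proportional to exposure time, with aperture,
    ISO and scene brightness unchanged. One stop is a doubling of light.
    """
    if t_new <= 0 or t_ref <= 0:
        raise ValueError("exposure times must be positive")
    return math.log2(t_new / t_ref)

# Hypothetical shutter speeds, in seconds, compared against 1/100 s.
reference = 1 / 100
for label, t in [("1/400 s", 1 / 400), ("1/100 s", 1 / 100), ("1/25 s", 1 / 25)]:
    print(f"{label}: {stops_difference(t, reference):+.1f} stops vs 1/100 s")
```

Under these assumptions, quartering the exposure time costs two stops of light and quadrupling it gains two, which is the underexposed/overexposed swing described above.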

Some camera sensors also include a DRAM chip as dedicated memory to handle high-speed photography, such as burst shots and high-speed recording (960 FPS and beyond). It functions similarly to the DRAM cache on an SSD: the DRAM takes in large streams of data and then flushes them to the main storage of the device.
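A back-of-the-envelope calculation hints at why a fast buffer helps. The resolution (1280x720) and bit depth (10 bits per pixel) below are assumptions picked for illustration, not figures from any specific sensor, and the helper name is hypothetical.

```python
def raw_data_rate_bytes_per_s(width: int, height: int, bits_per_pixel: int, fps: int) -> float:
    """Uncompressed sensor output in bytes per second."""
    bytes_per_frame = width * height * bits_per_pixel / 8
    return bytes_per_frame * fps

# Assumed values: 720p frames, 10-bit raw samples, 960 frames per second.
rate = raw_data_rate_bytes_per_s(1280, 720, 10, 960)
print(f"{rate / 1e9:.2f} GB/s of raw data")  # prints 1.11 GB/s of raw data
```

With these assumed numbers the sensor produces over a gigabyte of raw data every second, which is why it gets parked in fast memory first and written out to storage afterwards.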

Physics Says You Can’t Do That

Smartphones are incredibly thin compared to full-size mirrorless cameras (let alone DSLRs) – most don’t exceed 10mm in thickness, so all of the elements above need to be crammed into the incredibly tight confines of the smartphone chassis. Remember when we mentioned bigger is better? That’s exactly where smartphones have begun to hit a wall in recent years. (It has also likely resulted in the ever-increasing size of camera bumps.)

Periscope cameras were born to solve the zoom problem: by turning the lens assembly sideways and bouncing light into it with a prism, they fit a longer lens along the width of the phone rather than its thickness. Phone cameras used to max out at 2x optical zoom, which can be limiting if someone wants to capture photos from large distances without losing quality through digital zoom. That has since been expanded to 5x and even 10x on some phones – which is enough to cover the realistic photo capturing distance in most scenarios.
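The quality loss from digital zoom can be sketched with simple arithmetic. This assumes digital zoom is a plain crop followed by upscaling, and it ignores multi-frame tricks that real phones may apply. The 12 MP sensor is a hypothetical example, and `effective_megapixels` is an illustrative name.

```python
def effective_megapixels(sensor_mp: float, digital_zoom: float) -> float:
    """Megapixels of real sensor data left after cropping to a digital zoom factor.

    A zoom of z keeps 1/z of the frame's width and 1/z of its height,
    so only 1/z**2 of the pixels survive before upscaling.
    """
    if digital_zoom < 1:
        raise ValueError("digital zoom factor must be >= 1")
    return sensor_mp / digital_zoom ** 2

# Hypothetical 12 MP sensor at a few zoom levels.
for zoom in (1, 2, 5, 10):
    print(f"{zoom}x digital zoom: {effective_megapixels(12, zoom):.2f} MP of real data")
```

On this simplified model, a 12 MP sensor at 5x digital zoom is working from under half a megapixel of actual data, whereas a 5x optical lens keeps the full sensor in play.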

However, sensors can’t quite expand the way lenses do. While recent smartphones tout the use of 1-inch sensors, these are not physically 1 inch in size (the actual diagonal is ~0.63 inch). The name is a holdover from old video camera tubes, whose size was quoted as the outer diameter of the glass tube rather than the imaging area inside it.
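As a quick sanity check on that figure, the sketch below computes the diagonal from the commonly quoted 13.2 mm x 8.8 mm dimensions of a 1-inch-type sensor. Those dimensions are an assumption here (actual phone sensors vary slightly), and `diagonal_inches` is just an illustrative helper.

```python
import math

MM_PER_INCH = 25.4

def diagonal_inches(width_mm: float, height_mm: float) -> float:
    """Diagonal of a rectangular sensor, converted from millimetres to inches."""
    return math.hypot(width_mm, height_mm) / MM_PER_INCH

# Assumed dimensions for a "1-inch type" sensor: 13.2 mm x 8.8 mm.
print(f"{diagonal_inches(13.2, 8.8):.2f} inch diagonal")
```

With those dimensions the diagonal comes out at roughly 0.62 inch, in line with the ~0.63 inch figure above and well short of a full inch.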

Even so, space within a smartphone is very valuable for chips and sensors – which is part of the reason we lost things like the 3.5mm jack in the first place. You can’t just put larger and larger sensors (such as APS-C) into such a limited space, so what happens next?

Enter computational photography – or “AI” as most smartphone manufacturers like to call it.

Overcompensating?

Here’s where things get a little muddy. “AI” is an incredibly broad term: some may call it a good old algorithm, others may call it machine learning. Manufacturers don’t usually publicize in detail how they process the raw images taken from the sensor; it’s mostly a rather vague “brighter colors, more depth” kind of deal. Some photography enthusiasts have argued that photos taken with a smartphone aren’t necessarily “real” due to the way they are processed.

They may have a point, though. Recently Samsung has come under fire (yet again) for its “Space Zoom” feature – first introduced in the Galaxy S20 Ultra – that practically “recreates” the image of the moon rather than just enhancing it, going by the observations made in this Reddit post. However, it’s not the first offender in these shenanigans: that award actually goes to Huawei (and Honor, its subsidiary back then) – with its P30 series all the way back in 2019.

Here’s the question: do you consider that moon to really be shot by yourself? Or is it just generated by AI on the device itself? Both vendors have offered rather vague explanations of how their respective features work, but some have argued that having the AI effectively replace the moon doesn’t make it a moon photo that the user has taken themselves.

Still, in both cases there’s a silver lining at least. Samsung has clarified that turning off the Scene Optimizer feature disables this post-processing, so that what you get is what you see; Huawei likewise clarified back in 2019 that its feature is optional.

In the end, it’s all about preference – some may prefer to have the phone’s AI handle the heavy lifting, while others prefer an unprocessed image to add their own artistic style to (or just prefer a real, untouched image). The important thing, as always, is to give the choice to everyone. And perhaps – more R&D resources can be put into a better hardware and software experience, but I digress.

Info Source: LaptopMag (1, 2) | The Slo Mo Guys (YouTube) | u/ibreakphotos (r/Android) | Android Authority | The Verge | Samsung

(FYI: “Moonshot” is a term that was popularized by NASA’s moon landing missions some 50 years ago.)